The gap between Standard Definition (SD) screens and the introduction of High Definition (HD) screens was an eternity. Then, roughly a decade after HD’s wide adoption, we started seeing Ultra High Definition (UHD), also known as 4K, come onto the scene. And just as consumers unbox those brand new UHD screens to bask in the awe of their crisp and vivid images, UHD is turning out to be merely a stepping stone to much greater resolutions.

Even while UHD-based screens were just entering stores, 5K screens were already starting to dribble out into the PC monitor market, and much talk of even greater resolutions was already making the rounds in electronics circles. In fact, while UHD 4K screens were being launched, you could already buy 8K screens (7680×4320 pixels) and have a handful of options to choose from. Admittedly, you’d be convulsing with sticker shock at any of the 8K options, but it ain’t cheap mass producing decent yields of devices with the precision of 33,177,600 pixels. However, prices inevitably go into free fall as manufacturing processes mature. 4K was once prohibitively expensive; now you can pick up a fully featured 50″ 4K LED smart TV from a major retailer like Target for $650. Expect the same for 8K in the very near future.

I’m guessing Sony sells 4K televisions at this electronics retailer

So is it onward and upward to ever greater resolutions, even beyond 8K? Not necessarily. Eventually, screen manufacturers will start to bump up against physical and material limitations similar to those starting to plague PC makers. Component manufacturers such as CPU and GPU makers are close to hitting a wall on how finely they can lithograph circuitry into silicon-based chips. Current high-end consumer processors, such as Intel’s i7-6700, are based on a 14nm (a nanometer is one billionth of a metre) lithography, which really is pushing the limit. Intel and the like will be able to truck along for years more, but a new direction in processors will soon be compulsory in order to further scale performance and capability.

The rapid rate of screen technology innovation in the past several years has been astonishing, and isn’t slowing.

One could argue that’s the natural transition point to quantum computing, and the trajectory of its progress as the replacement processing technology seems to be on track. In fact, it seems highly likely that the overhauls of screen and processor technologies will converge at roughly the same point in time, with that convergence providing the computational power needed to support such advanced display methods.

Also worth considering: 8K resolution is close to the level of detail the human eye can perceive, and going much beyond that will start to yield diminishing returns. There will still be room for further resolution gains, very likely well into double-digit ‘K’ territory, that bring a discernible improvement in image clarity, but we’ve somewhat hit the sweet spot for now. The pursuit of perfection and a premium product will always have companies pushing beyond what is considered the general human range.
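To put a rough number on “close to what the eye can perceive”, a common rule of thumb is that 20/20 vision resolves detail down to about one arcminute. The sketch below, assuming that rule of thumb and a 16:9 panel, estimates the viewing distance beyond which individual pixels blend together. The 65″ screen size and the function name are illustrative choices, not figures from any manufacturer.

```python
import math

# Rule of thumb (assumption): 20/20 vision resolves detail down to ~1 arcminute.
ARCMINUTE_RAD = math.radians(1 / 60)


def pixel_blend_distance_in(diagonal_in: float, h_pixels: int,
                            aspect: tuple[int, int] = (16, 9)) -> float:
    """Viewing distance (inches) at which one pixel subtends ~1 arcminute.

    Beyond this distance, a viewer with roughly 20/20 vision should no longer
    be able to pick out individual pixels on the panel.
    """
    w, h = aspect
    width_in = diagonal_in * w / math.hypot(w, h)  # panel width from its diagonal
    pixel_pitch_in = width_in / h_pixels           # width of a single pixel
    return pixel_pitch_in / math.tan(ARCMINUTE_RAD)


for name, h_px in [("HD", 1920), ("4K UHD", 3840), ("8K", 7680)]:
    feet = pixel_blend_distance_in(65, h_px) / 12
    print(f'{name:>6} on a 65" screen: pixels blend together beyond ~{feet:.1f} ft')
```

Under these assumptions the distances come out at roughly 8.5 ft for HD, 4.2 ft for 4K and 2.1 ft for 8K. From a typical couch, 8K pixels are already finer than the eye can resolve, which is exactly where the diminishing returns kick in.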

Audiophiles demand more than the “normal” range of audio reproduction

Think of audio technology. We could all have easily lived with audio equipment that reproduced sound in the range of 20Hz-20,000Hz. That’s generally what most people’s ears can perceive (with a wider range in your youth and a swift drop-off as you ferment), so that range would be just fine for the bulk of the population. However, to refine the sound to audiophile standards, and for those whose hearing hasn’t been ravaged by age and years of all-night raves, audio equipment has been produced that goes beyond that range. For example, professional studio headphones exist that faithfully reproduce 5Hz-40,000Hz, and some reference grade headphones even claim 5Hz-54,000Hz. This extended range gives such equipment a truly accurate sound, revealing every little nuance of the recorded material that would otherwise be missed at the “good enough” range for the general populace. Screen resolution will be no different: 8K will come and go, and the pursuit of perfection will continue.

Infinitely Variable Screens

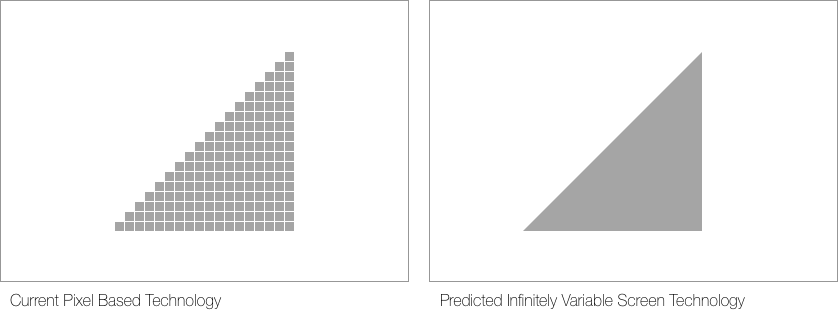

This is where I predict we could transition to what I’ve termed “Infinitely Variable Screen” technology. The expression of moving images as rapidly lit patterns of colored pixels will eventually give way to an infinitely scalable, ‘vector-like’ rendering system.

In such a system, a single recorded format would support any resolution, rather than being constrained to a fixed recorded resolution. Think of such a format as an incredibly high-resolution, high frame rate, high color depth, full motion video version of the SVG (Scalable Vector Graphics) format designers currently use for rendering illustrations on web pages. While today’s SVG format and the proposed future format would ultimately bear little resemblance to each other, both essentially render images from vector coordinates. The complexity comes with rendering lifelike images in motion: the horsepower needed to do so would be tremendous.
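The core idea shared with SVG, a shape stored once in resolution-independent coordinates and realised at whatever resolution the display offers, can be sketched in a few lines. This is only an illustration under stated assumptions: the `Circle` type and `rasterize` function are hypothetical, and sampling onto a square pixel grid is used purely to show one description serving many resolutions. A true IVS display would presumably not rasterize to pixels at all.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Circle:
    """Hypothetical vector primitive in normalized [0, 1] coordinates."""
    cx: float
    cy: float
    r: float


def rasterize(shape: Circle, size: int) -> list[list[int]]:
    """Sample one resolution-independent description onto a size x size grid."""
    grid = []
    for y in range(size):
        row = []
        for x in range(size):
            # Map each pixel centre back into the normalized coordinate space.
            u, v = (x + 0.5) / size, (y + 0.5) / size
            inside = (u - shape.cx) ** 2 + (v - shape.cy) ** 2 <= shape.r ** 2
            row.append(1 if inside else 0)
        grid.append(row)
    return grid


disc = Circle(cx=0.5, cy=0.5, r=0.4)  # stored once, no resolution attached
for size in (16, 64, 256):
    covered = sum(map(sum, rasterize(disc, size))) / size ** 2
    print(f"{size}x{size}: {covered:.4f} of the frame filled")
```

As the grid gets finer, the filled fraction converges on the shape’s true area (π × 0.4², about 0.503), because every resolution samples the same exact description rather than upscaling a fixed pixel master.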

No longer will screens be principally defined by the density and speed of a matrix of fixed pixels.

This would almost certainly mean the very way video is shot would also need to change, possibly to a modernised version of what film originally was. Film wasn’t based on pixels, although one could say the composition of the material somewhat defined the theoretical “resolution” of what could be captured. Current photo sensors would need to give way to a new method of capturing light as solid image data, which is in turn translated into high-resolution vector nodes that can be positionally adjusted as motion is captured.

The Reality of Virtual Reality

Pixel-based screen technology simply isn’t ideal for virtual reality. Because your eyes are so close to the screens, they can identify the separation between pixels unless pixel density increases considerably. Current technology often creates what people describe as a “screen door effect”, similar to looking outside through the screen door of a house and noticing the fine lines running through your field of view.
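One way to quantify the screen door effect is angular pixel density: how many pixels land in each degree of the field of view. The sketch below assumes the common one-arcminute rule of thumb for 20/20 vision (about 60 pixels per degree), ignores lens distortion by averaging across the field of view, and uses a hypothetical headset with 1280 pixels per eye spread over 100 degrees.

```python
# Assumption: ~1 arcminute per pixel (60 pixels per degree) matches 20/20 vision.
ACUITY_PPD = 60


def pixels_per_degree(h_pixels_per_eye: int, h_fov_deg: float) -> float:
    """Average horizontal pixel density across a headset's field of view."""
    return h_pixels_per_eye / h_fov_deg


def pixels_needed(h_fov_deg: float, target_ppd: float = ACUITY_PPD) -> int:
    """Horizontal pixels per eye needed to reach a target angular density."""
    return round(h_fov_deg * target_ppd)


# Hypothetical headset: 1280 px per eye across a 100-degree field of view.
print(pixels_per_degree(1280, 100))  # 12.8 pixels per degree
print(pixels_needed(100))            # 6000 pixels per eye for ~60 ppd
```

Even a full 8K panel split between two eyes (3840 pixels each) would only reach about 38 pixels per degree over the same field of view under these assumptions, which shows how far pixel density has to climb before the screen door disappears.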

Your smartphone serves double duty as a VR engine with Samsung Gear VR

In fact, VR stands the greatest chance of truly benefiting from Infinitely Variable Screen technology. It solves one of VR’s greatest hurdles to mass acceptance: getting the screen right. A truly immersive and believable experience depends on a display free of frame lag, tearing, perceptible pixels and the other technological limitations that jerk the user back into reality.

And None of it Matters

It’s amazing that after such a long wait to get from SD to HD screens, it is now the screens that are waiting for everything else to catch up. Typical broadband connectivity, set top boxes, broadcast channels and video media of your choice are simply not up to the task of delivering native 4K resolution, let alone 5K or 8K. You’d expect PCs to be in the best position to play nicely with 4K, yet the average installed graphics hardware in PCs is woefully underpowered for rendering games at that resolution.

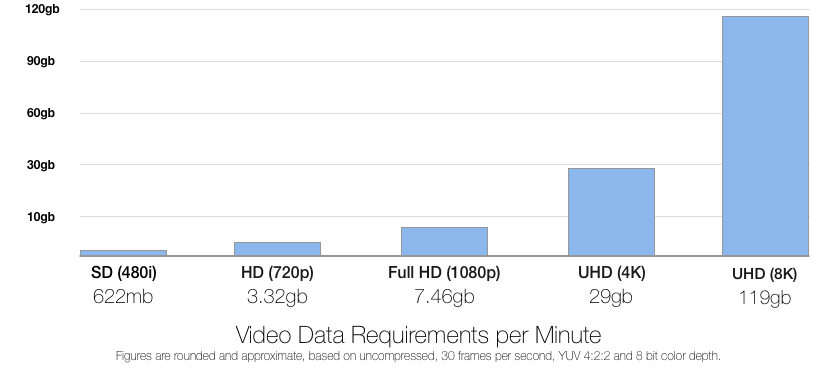

Displaying 4K video gobbles up an eye-watering 40GB per hour compressed and 700GB per hour raw uncompressed (numbers vary considerably depending on factors such as colour depth, frame rate and compression codec; these are only rough figures). This would blow through a typical person’s entire monthly download cap from their Internet service provider, not to mention the gross amount of patience needed to download such volumes of data over today’s typical connection speeds. Curious what raw 8K video would blow through? Try 119GB per minute, and if you ratchet up the frame rate from 30fps to 60fps and the colour depth from 8 bits to 16 bits, you’re clearing almost half a terabyte per minute. You can strike out any thoughts of real-time streaming at such resolutions for the foreseeable future, yet as a society we’ve shifted away from physical media to streaming video. So what to do?
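The assumptions behind the 8K figures aren’t stated, but they appear to line up with 8-bit video using 4:2:2 chroma subsampling (an average of two samples per pixel) at 30fps. The sketch below reproduces that arithmetic under those assumptions, using decimal gigabytes; the function name is illustrative.

```python
def raw_video_bytes(width: int, height: int, fps: float, bits_per_sample: int,
                    samples_per_pixel: float, seconds: float) -> float:
    """Uncompressed video size in bytes.

    samples_per_pixel: 3.0 for full RGB / 4:4:4, 2.0 for 4:2:2 chroma
    subsampling, 1.5 for 4:2:0.
    """
    bits_per_frame = width * height * samples_per_pixel * bits_per_sample
    return bits_per_frame * fps * seconds / 8


GB = 1e9  # decimal gigabytes

# One minute of 8K at 30 fps, 8 bits per sample, 4:2:2 (assumed)
print(raw_video_bytes(7680, 4320, 30, 8, 2.0, 60) / GB)   # ~119.4
# Same frame size at 60 fps and 16 bits per sample
print(raw_video_bytes(7680, 4320, 60, 16, 2.0, 60) / GB)  # ~477.8
```

Doubling the frame rate and doubling the bit depth each double the total, which is how 119GB per minute turns into almost half a terabyte.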

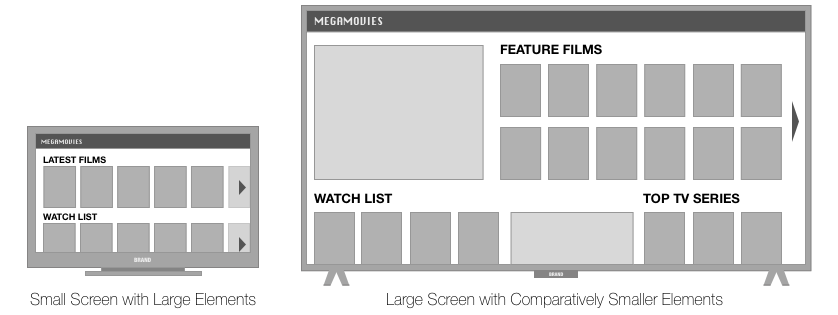

Interface Design, Stuck in a Rut

On top of this, many of the latest generation devices, such as streaming media players and set top boxes, are starting to support and output 4K video, but their makers have completely neglected to rethink the user interface to make use of that extra real estate. Thus you end up with oversized movie cover art, 12 movie covers when 36 would fit comfortably, unnecessarily truncated titles and so forth. Connected to an 8K screen, current interfaces would look laughable. So in addition to the video stream itself, the way interfaces scale to accommodate infinitely variable resolutions and physical screen sizes needs to be considered.

None of the latest crop of devices considers the context of use in order to best configure its interface. The layout looks identical between HD and UHD; identical whether you have a 32″ screen or a 90″ screen; identical whether you’re 10 feet or 30 feet away from the screen. It’s literally oblivious to the world around it, and that shouldn’t be the case in 2016.
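As a sketch of what context-aware layout could look like, the hypothetical functions below size each cover so it subtends a fixed visual angle at the viewer’s eye, then count how many fit across the screen. The 4-degree tile angle and 20% gap are arbitrary assumptions, and the screen size and viewing distance are taken as given inputs.

```python
import math


def screen_width_in(diagonal_in: float, aspect: tuple[int, int] = (16, 9)) -> float:
    """Physical panel width derived from its diagonal and aspect ratio."""
    w, h = aspect
    return diagonal_in * w / math.hypot(w, h)


def cover_columns(width_in: float, distance_in: float,
                  tile_angle_deg: float = 4.0, gap_ratio: float = 0.2) -> int:
    """Number of cover-art tiles that fit across the screen.

    Each tile is sized to subtend tile_angle_deg at the viewer's eye, so the
    artwork appears the same size whether the screen is small and close or
    large and far away. Both defaults are arbitrary, hypothetical values.
    """
    tile_in = 2 * distance_in * math.tan(math.radians(tile_angle_deg) / 2)
    pitch_in = tile_in * (1 + gap_ratio)  # a tile plus the gap that follows it
    return max(1, int(width_in // pitch_in))


for diagonal, feet in [(32, 10), (90, 10), (90, 30)]:
    cols = cover_columns(screen_width_in(diagonal), feet * 12)
    print(f'{diagonal}" screen at {feet} ft: {cols} covers per row')
```

With these defaults, the 90″ screen viewed from 10 feet gets 7 covers per row while the 32″ screen and the 90″ screen viewed from 30 feet get 2 each. Resolution then only decides how many pixels each tile receives, not how many tiles there are.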

If scaling video is in the cards, then scaling menus and media libraries most certainly is. Modern HDMI video interconnects can transmit a wealth of display specifications back to the outputting device, and many smart TVs are equipped with cameras and sensors. Combining all of this data to drive how the interface is configured makes perfect sense, yet it is rarely, if ever, implemented in current devices.
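The display information HDMI hands back to a source is EDID (Extended Display Identification Data), whose 128-byte base block includes the panel’s physical size and its preferred resolution. A minimal sketch of reading those fields, following the EDID 1.3/1.4 base-block layout; how a device obtains the raw bytes is platform-specific and not shown, and `parse_edid` is a hypothetical helper name.

```python
EDID_HEADER = bytes([0x00, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0x00])


def parse_edid(edid: bytes) -> dict:
    """Read physical size and preferred resolution from an EDID base block."""
    if len(edid) < 128 or edid[:8] != EDID_HEADER:
        raise ValueError("not an EDID base block")
    if sum(edid[:128]) % 256 != 0:  # all 128 bytes must sum to 0 mod 256
        raise ValueError("EDID checksum mismatch")

    # Bytes 54-71 hold the first detailed timing descriptor, which carries
    # the display's preferred mode.
    dtd = edid[54:72]
    preferred = None
    if dtd[0] or dtd[1]:  # a zero pixel clock means it is not a timing descriptor
        h_active = dtd[2] | ((dtd[4] & 0xF0) << 4)  # low 8 bits + high nibble
        v_active = dtd[5] | ((dtd[7] & 0xF0) << 4)
        preferred = (h_active, v_active)

    return {
        "width_cm": edid[21],   # both size bytes are 0 if the size is undefined
        "height_cm": edid[22],
        "preferred_mode": preferred,
    }
```

Paired with a viewing distance estimated by a TV’s camera or sensors, the physical size alone would be enough for an interface to choose a sensible layout.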

How It Could Work

This is the biggest question mark hanging over the whole prediction: how will these vector-based images be captured, stored and ultimately displayed? An entire supporting ecosystem would need to be developed, deployed and widely adopted.

Recording and Playback

Video recording will need to capture light optically in a manner closer to original film cameras, where a material responds to patterns of light and that response is somehow translated into storable data. While film seemed to produce solid images, it was still physically limited by the density of chemical particles reacting to light (think of them as pre-digital pixels). Infinitely Variable Screen recording technology would likely work similarly, but at a far more granular, nanoscale level.

Lasers (yes, freakin’ lasers) potentially offer the precision, rendering speed and brightness to produce such moving images. More likely, though, a new substance or set of materials and surrounding technologies will be engineered to form solid images from vector data. Advances in materials have been tremendous over the past few years, and they will continue to accelerate, a lot. This is all very much in the realm of possibility; what’s more up in the air is how to get that content to your screen…

Handling Copious Amounts of Data

The bright side of Infinitely Variable Screen (IVS) technology is that instead of storing the state of millions of pixels per frame of motion, it would store vector coordinate data, which could prove highly efficient when compressed appropriately. That being said, the storage and transmission needs would still be on an incredibly grand scale compared to current technologies.
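The trade-off can be sketched with two toy size estimates: an uncompressed pixel frame grows with the square of linear resolution, while a vector frame’s size depends on scene complexity alone. Every vector figure here is an assumption for illustration: 2 million primitives per frame, each described by 16 four-byte floats, with no compression applied to either format.

```python
def raster_frame_bytes(width: int, height: int, bytes_per_pixel: int = 3) -> int:
    """Uncompressed pixel frame: grows with the square of linear resolution."""
    return width * height * bytes_per_pixel


def vector_frame_bytes(primitives: int, floats_each: int = 16,
                       bytes_per_float: int = 4) -> int:
    """Hypothetical vector frame: size tracks scene complexity, not resolution."""
    return primitives * floats_each * bytes_per_float


VECTOR_MB = vector_frame_bytes(2_000_000) / 1e6  # assumed: 2 million primitives

for label, w, h in [("HD", 1920, 1080), ("4K", 3840, 2160),
                    ("8K", 7680, 4320), ("16K", 15360, 8640)]:
    raster_mb = raster_frame_bytes(w, h) / 1e6
    print(f"{label:>3}: raster {raster_mb:6.1f} MB vs vector {VECTOR_MB:.1f} MB")
```

With these made-up figures, the vector frame is larger than an uncompressed 8K frame but about a third the size of a 16K one: the pixel approach grows fourfold each time resolution doubles, while the vector description stays put, yet it remains large in absolute terms.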

The challenge is getting that whopping amount of data to your screen

By the time IVS technology becomes possible, video streaming and content rich in spatial, fully surrounding scenes for native VR and AR applications would be ubiquitous. The assumption is that there would be a greatly improved supporting infrastructure that, like now, would never quite keep up with the technology but would at least respond to the growing appetite for data.

Storing the likely terabytes of data IVS would need, and pushing them around the web, sounds insurmountable now, but storage and bandwidth are merely in a temporary plateau that will give way to massive acceleration in the coming years. The plateau exists largely because commercial interests conflict with consumer needs. Whether it be storage space on a device or bandwidth transferred across the Internet, capacities are intentionally limited to artificially create scarcity and inflate value. The usual reasons are to spur up-selling into more lucrative, higher-tier capacity options, to push supplementary products such as cloud storage subscriptions, or to avoid admittedly large infrastructure and R&D costs.

Storage

Such artificial storage limitations are easily demonstrated by buying a flagship iPhone 6s Plus for $749USD and having to contend with its minuscule 16GB of built-in, non-expandable storage. By the time you’ve factored in the OS and its default apps, a handful of your own favourite apps and an assortment of favourite songs, shooting 4K video with its camera onto the remaining storage would surely be a frustratingly limited experience. It’s an impractical, imposed limit on an otherwise robust device, existing purely to sell higher capacity models and encourage cloud storage subscriptions.
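A quick back-of-the-envelope check shows how limiting that is. Both inputs below are assumptions for illustration, not measured figures: about 10GB left free after the OS, apps and music, and roughly 350MB per minute for 4K video at 30fps.

```python
def minutes_of_video(free_gb: float, mb_per_minute: float) -> float:
    """Minutes of recording that fit in the given free space (decimal units)."""
    return free_gb * 1000 / mb_per_minute


# Assumed inputs: ~10 GB free, ~350 MB per minute of 4K at 30 fps.
print(f"{minutes_of_video(10, 350):.0f} minutes of 4K video")
```

Under those assumptions, the phone fills up after roughly half an hour of 4K footage.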

16GB non-expandable capacity on a premium flagship phone in 2016? Seriously?

However, as the initial R&D costs are paid down, manufacturing yields improve and the cost of producing ever larger storage capacities drops, competition will force companies to cut prices and vastly increase capacities in such devices, or someone else will. Think of how the price per gigabyte of mechanical hard disk drives has plummeted over the past several years. 4 terabytes of storage is now remarkably cheap thanks to that very same combination of factors.

Recent comments by storage and processor manufacturer Intel circulating in the press indicate that the puny capacities of today’s solid state storage, which typically hover in the 16GB to 512GB territory, will likely blow out to 15TB (yes, terabytes) in the next year or so. This is all thanks to new techniques for building storage devices: stacking NAND flash in layers vastly increases capacity with little impact on manufacturing cost or the physical form factor of the device itself. Essentially, storage is at the start of what’s being coined ‘3D’ storage (and plenty of trademarked names already play on this concept). And the 15TB capacities they predict are just a glimmer of what’s to come as these stacked manufacturing methods are perfected.

The form factor soon to hold double digit terabytes

Once that technology comes to life, likely starting out in enterprise applications and working its way down to the typical consumer, the gates will have opened. All competitors will create their “me too” products and we’ll have jumped from the gigabyte era of solid state storage to the terabyte era.

Transmission

As with storage, much of the transmission technology already exists, albeit in limited and sometimes prototype form, for us all to be enjoying breakneck gigabit speeds and beyond; Google Fiber proves this in geographically limited instances. Many interdependent technologies would need to come together to form an infrastructure capable of reliably supporting IVS and other bandwidth-munching applications, which would then need to be deployed across massive geographies, from large cities to small towns. Unfortunately, it comes down to a handful of historically reluctant companies and government departments, each with dominant control over its part of that equation, to make their move.

The lifelines fuelling our insatiable demand for rich online content

- Infrastructure from backbone to “last mile” must increase capacity

- New intelligent data packet transport protocols and routing

- Removal of unnecessarily imposed data transmission caps and throttling

- Further advances in video compression technologies

- Point of service device advancements
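A quick way to see why the items above matter is to compare the bitrate a file size implies with what a link can actually deliver. A sketch, assuming decimal gigabytes, a link running flat out with no protocol overhead, and a hypothetical 25 Mbps “typical” connection alongside gigabit fibre; the 40GB figure is this article’s rough per-hour estimate for compressed 4K.

```python
def sustained_mbps(size_gb: float, duration_s: float) -> float:
    """Average bitrate (megabits/s) needed to stream size_gb within duration_s."""
    return size_gb * 8e9 / duration_s / 1e6


def download_hours(size_gb: float, link_mbps: float) -> float:
    """Hours to pull size_gb over a link running flat out at link_mbps."""
    return size_gb * 8e9 / (link_mbps * 1e6) / 3600


# Streaming 40 GB per hour needs a sustained ~88.9 Mbps.
print(f"{sustained_mbps(40, 3600):.1f} Mbps to stream in real time")
for link in (25, 1000):  # hypothetical typical link vs gigabit fibre
    print(f"{link:>4} Mbps: {download_hours(40, link) * 60:.1f} minutes to download")
```

Under these assumptions the 25 Mbps link needs well over three hours to fetch one hour of 4K, while a gigabit link does it in about five minutes.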

At today’s typical resolutions of 1080p HD or lower, streaming providers like Netflix already consume a sizeable chunk of all Internet traffic; now imagine video files sized in the hundreds of gigabytes. Current packet transfer protocols would get bogged down attempting to deliver such a volume of data en masse. New transfer protocols, compression techniques and routing equipment would need to be deployed to route packets by priority, with extreme efficiency and adaptive intelligence. That’s a big ask, given the agonisingly slow rollout of IPv6, a critical upgrade to the antiquated IPv4 Internet protocol that resolves the exhaustion of available IP addresses we’ve known was coming for decades. Talk about procrastinating.
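The scale of that overdue upgrade is easy to see with Python’s standard `ipaddress` module: the entire IPv4 space holds 2³² addresses, fewer than there are people on the planet, while IPv6 offers 2¹²⁸.

```python
import ipaddress

# Total size of each address space, taken from the all-encompassing networks.
ipv4_total = ipaddress.ip_network("0.0.0.0/0").num_addresses
ipv6_total = ipaddress.ip_network("::/0").num_addresses

print(f"IPv4: {ipv4_total:,} addresses")   # 4,294,967,296 (2**32)
print(f"IPv6: {ipv6_total:.3e} addresses")  # about 3.4e38 (2**128)
```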

Likelihood of This Prediction

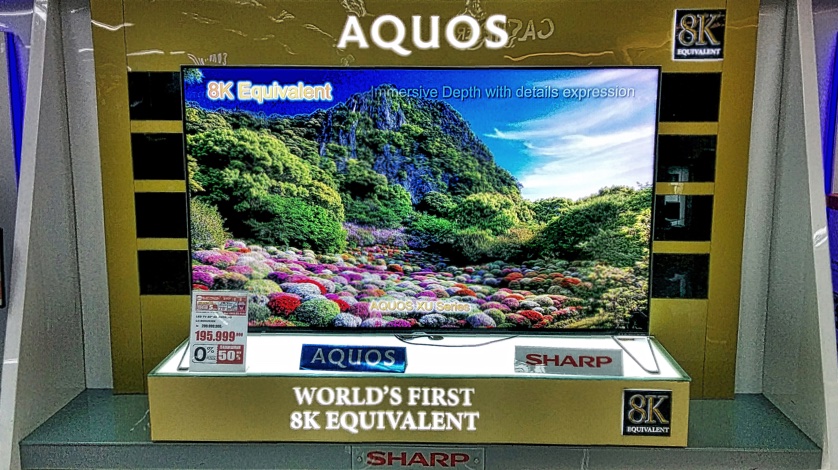

While “equivalent” is code for “not quite there”, it’s still capable of upscaling to 8K

If I’d told you twenty years ago that in the future you could commonly buy a television 60 inches or larger and less than a couple of inches thick on a modest suburban family income, you’d have given me suspicious looks. Likewise, if I’d told you that screens would be developed that are literally plastic sheets, bendable and virtually weightless, again: odd looks and crickets chirping. This prediction isn’t much different. Given the current trajectory of related technologies, it seems highly probable within a similar timeframe, and progress may very well exceed it. When you see retail showrooms with the next generation alongside the latest generation of televisions, it proves just how fast it’s all progressing.